A list of AI agents and robots to block on your server.

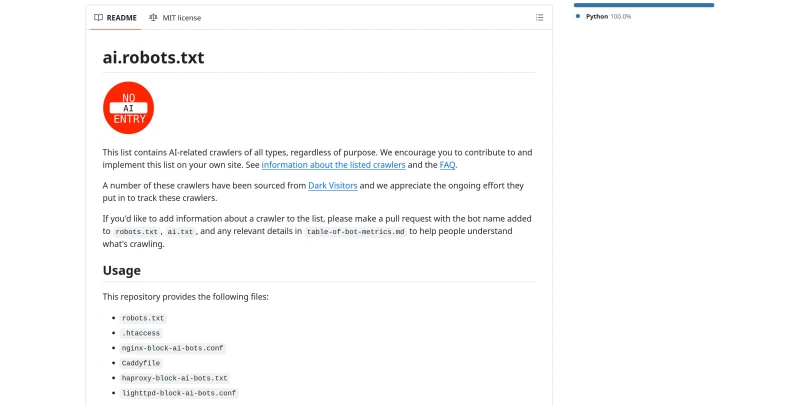

ai.robots.txt is an open-source, community-driven repository that maintains a comprehensive list of AI-related web crawlers and robots that website owners may want to block.

The main features of this tool include:

Comprehensive Bot Database: The list covers AI-related crawlers of every type, regardless of their specific purpose. Many entries are sourced from Dark Visitors, which keeps the tracking up to date.

Multi-Platform Configuration Files: To simplify implementation, the project provides ready-to-use files for various web servers and load balancers, including robots.txt, .htaccess (Apache), Nginx, Caddy, HAProxy, and Lighttpd.
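Server-level files such as .htaccess or an Nginx config generally work by matching the incoming User-Agent header against an alternation of the listed bot names, then refusing the request on a match. The Python sketch below shows that matching step. It is not the project's generator. It assumes case-insensitive substring matching, similar to Nginx's `~*` regex operator. The four agent names are only an illustrative subset.

```python
from __future__ import annotations

import re

# Hypothetical subset of user-agent tokens for illustration; the project's
# robots.json list is the authoritative and much longer source.
BLOCKED_AGENTS = ["GPTBot", "ClaudeBot", "CCBot", "Bytespider"]


def build_agent_pattern(agents: list[str]) -> re.Pattern:
    """Compile one case-insensitive alternation such as (GPTBot|ClaudeBot|...)."""
    # re.escape stops characters like "-" or "." from acting as regex syntax.
    alternation = "|".join(re.escape(agent) for agent in agents)
    return re.compile(f"({alternation})", re.IGNORECASE)


def should_block(user_agent: str, pattern: re.Pattern) -> bool:
    """Return True if any listed token occurs anywhere in the User-Agent header."""
    return pattern.search(user_agent) is not None


if __name__ == "__main__":
    pattern = build_agent_pattern(BLOCKED_AGENTS)
    print(should_block("Mozilla/5.0 (compatible; GPTBot/1.1)", pattern))  # True
    print(should_block("Mozilla/5.0 (X11; Linux x86_64) Firefox/128.0", pattern))  # False
```

Matching anywhere in the header, instead of requiring an exact match, reflects how many bots embed their token inside a longer Mozilla-style string. The trade-off is that a very short token could also match unrelated clients.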

Automated Update System: The repository uses GitHub Actions to automatically generate and update all configuration files whenever the community modifies the central robots.json list.

Support for Content Licensing: Beyond simple blocking, it supports the Really Simple Licensing (RSL) standard, enabling site owners to license their content to AI companies.
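The automated update system regenerates every configuration file from the central robots.json list. The sketch below renders the robots.txt output from such a list. It assumes robots.json is a JSON object keyed by user-agent name. The sample data and its "operator" field are hypothetical, and the project's actual GitHub Actions workflow and generator may work differently.

```python
from __future__ import annotations

import json

# Hypothetical excerpt shaped like robots.json: one key per user-agent token.
# The metadata field is an assumption for illustration only.
SAMPLE_ROBOTS_JSON = """
{
  "CCBot": {"operator": "Common Crawl"},
  "ClaudeBot": {"operator": "Anthropic"},
  "GPTBot": {"operator": "OpenAI"}
}
"""


def render_robots_txt(robots: dict[str, dict]) -> str:
    """Emit a single group: every User-agent line, then one Disallow rule.

    RFC 9309 lets several user-agent lines share one group of rules, so the
    output stays compact however many bots are listed.
    """
    lines = [f"User-agent: {name}" for name in sorted(robots)]
    lines.append("Disallow: /")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    robots = json.loads(SAMPLE_ROBOTS_JSON)
    print(render_robots_txt(robots), end="")
```

Because all files derive from one JSON source, a contributor only has to edit that list, and every generated format stays consistent.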

Abuse Reporting and Security: It provides tools to report abusive crawlers that do not respect the Robots Exclusion Protocol (RFC 9309) and offers guidance on using Cloudflare's hard block alongside the list.
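A crawler that honors the Robots Exclusion Protocol checks robots.txt before each request. A logged request that robots.txt forbids is therefore the kind of evidence an abuse report needs. The sketch below flags such requests with Python's standard `urllib.robotparser`.

Some assumptions apply:
- The robots.txt excerpt and log entries are hypothetical.
- The log is assumed to be already reduced to product tokens such as GPTBot.
- `can_fetch` only compares the part of the agent string before the first "/".

```python
from __future__ import annotations

from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt excerpt in the same shape as the project's output.
ROBOTS_TXT = """\
User-agent: GPTBot
User-agent: CCBot
Disallow: /
"""

# Hypothetical (user-agent token, requested path) pairs extracted from an access log.
ACCESS_LOG = [
    ("GPTBot", "/blog/post-1"),
    ("Firefox", "/blog/post-1"),
    ("CCBot", "/about"),
]


def find_violations(robots_txt: str, requests: list[tuple[str, str]]) -> list[tuple[str, str]]:
    """Return the requests that a robots.txt-compliant crawler would not have made."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [(agent, path) for agent, path in requests if not parser.can_fetch(agent, path)]


if __name__ == "__main__":
    for agent, path in find_violations(ROBOTS_TXT, ACCESS_LOG):
        print(f"{agent} fetched disallowed path {path}")
```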

Flexible Subscription Options: Users can stay updated on new bot additions by subscribing to RSS/Atom feeds or via GitHub's built-in release notifications.
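Any feed reader can follow these updates. The sketch below shows what a small polling script might do with an Atom feed: report entries updated since the last check. It parses an inline, hypothetical Atom document instead of fetching the project's real feed, whose URL is not given here. The entry titles are invented for illustration.

```python
from __future__ import annotations

import xml.etree.ElementTree as ET
from datetime import datetime, timezone

ATOM_NS = {"atom": "http://www.w3.org/2005/Atom"}

# Hypothetical feed content; entry titles and dates are illustrative only.
SAMPLE_FEED = """<feed xmlns="http://www.w3.org/2005/Atom">
  <title>Release notes (hypothetical)</title>
  <entry><title>Add ExampleBot</title><updated>2025-01-02T00:00:00Z</updated></entry>
  <entry><title>Add OtherBot</title><updated>2025-01-01T00:00:00Z</updated></entry>
</feed>"""


def parse_atom_time(value: str) -> datetime:
    # Atom uses RFC 3339 timestamps; older fromisoformat versions reject a trailing "Z".
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def new_entries(feed_xml: str, since: datetime) -> list[str]:
    """Return titles of entries whose <updated> time is later than `since`."""
    root = ET.fromstring(feed_xml)
    titles = []
    for entry in root.findall("atom:entry", ATOM_NS):
        title = entry.findtext("atom:title", default="", namespaces=ATOM_NS)
        updated = entry.findtext("atom:updated", default="", namespaces=ATOM_NS)
        if updated and parse_atom_time(updated) > since:
            titles.append(title)
    return titles


if __name__ == "__main__":
    last_check = datetime(2025, 1, 1, 12, tzinfo=timezone.utc)
    print(new_entries(SAMPLE_FEED, last_check))
```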

Community Contribution: As an open-source project under the MIT license, it encourages developers to submit pull requests to add new bots or metrics to help the community understand what is crawling their sites.
